How to Cache in AWS API Gateway

In this article, we'll look at how to cache responses in AWS API Gateway.

Why do we use cache in AWS API Gateway?

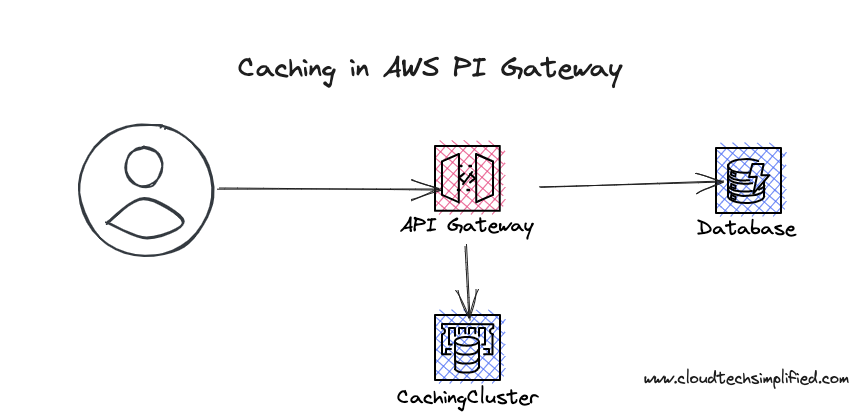

Caching is one of the most commonly used solutions to reduce the load on your backend.

Let's say you're building a knowledge base API that searches all of your documents. Some documents, such as leave policies :-), will be searched for far more often than others. Instead of querying your database every time, you can query it on the first request and cache the result for 5 minutes. Any later request that reaches API Gateway within those 5 minutes gets the result from the cache, and your backend endpoint isn't called at all, which reduces the load on the backend.
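
To make the idea concrete, here's a minimal sketch of a time-to-live (TTL) cache in TypeScript. It only illustrates the concept and is not how API Gateway implements its cache. The names `TtlCache` and `searchDatabase` are made up for this example, and a fake clock is used so the expiry is easy to follow.

```typescript
// Conceptual sketch only: TtlCache and searchDatabase are hypothetical names.
type Entry<V> = { value: V; expiresAt: number };

class TtlCache<V> {
  private entries = new Map<string, Entry<V>>();
  private ttlMs: number;
  private now: () => number;

  constructor(ttlMs: number, now: () => number = () => Date.now()) {
    this.ttlMs = ttlMs;
    this.now = now;
  }

  // Return the cached value while it is still fresh; otherwise call `load`,
  // store its result with a new expiry time, and return it.
  getOrLoad(key: string, load: () => V): V {
    const hit = this.entries.get(key);
    if (hit !== undefined && hit.expiresAt > this.now()) {
      return hit.value;
    }
    const value = load();
    this.entries.set(key, { value, expiresAt: this.now() + this.ttlMs });
    return value;
  }
}

// Demo with a fake clock (milliseconds) and a stand-in for the database.
let clock = 0;
let backendCalls = 0;
const searchDatabase = (query: string): string[] => {
  backendCalls += 1;
  return [`result for ${query}`];
};

const cache = new TtlCache<string[]>(5 * 60 * 1000, () => clock);

cache.getOrLoad("leave policy", () => searchDatabase("leave policy")); // miss: backend is called
clock = 60 * 1000;
cache.getOrLoad("leave policy", () => searchDatabase("leave policy")); // hit: still within 5 minutes
clock = 6 * 60 * 1000;
cache.getOrLoad("leave policy", () => searchDatabase("leave policy")); // expired: backend is called again
```

Three requests result in only two backend calls here. With a popular query and a 5-minute TTL, the saving is much larger.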

A cache cluster incurs additional cost, and the price goes up with the cluster size you choose. See the API Gateway pricing page for details.

Infrastructure

We're going to use AWS CDK to create the necessary infrastructure. It's an open-source software development framework that lets you define cloud infrastructure in code. AWS CDK supports many languages, including TypeScript, Python, C#, Java, and others. If you're new to it, a beginner's guide to AWS CDK is a good place to start. We're going to use TypeScript in this article.

REST API creation:

The code snippet below creates the REST API. The caching options are set in the deployOptions property.

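    // Imports assume AWS CDK v2 (the aws-cdk-lib package)
    import * as cdk from "aws-cdk-lib";
    import * as apigw from "aws-cdk-lib/aws-apigateway";

    // Inside your stack's constructor:
    const api = new apigw.RestApi(this, "cachingApi", {
      restApiName: "Caching API",
      description: "This is a caching API",
      deployOptions: {
        stageName: "prod",
        cachingEnabled: true,
        cacheTtl: cdk.Duration.seconds(10),
        cacheDataEncrypted: true,
        cacheClusterSize: "0.5",
      },
    });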

cachingEnabled: This property controls whether caching is enabled for this API.

cacheTtl: This is the time to live (TTL), i.e. how long the API caches a response. In the above code snippet, we cache the response for only 10 seconds so that it's quick to test. In the real world, you might cache for 2-5 minutes.

cacheDataEncrypted: This property controls whether the cached data is encrypted.

cacheClusterSize: This is the amount of memory allocated to the cache. In the above code snippet, we allocate 0.5 GB to the cache cluster. Other sizes are available; refer to the API Gateway pricing page for the list of sizes and their prices.
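
Since cacheClusterSize is passed as a plain string, a typo only shows up at deploy time. Here's a small sketch of a check you could run before building the stack. The helper name `validateCacheSettings` is hypothetical and not part of the CDK. The size list and the 3600-second TTL limit reflect the API Gateway documentation as I understand it, so double-check them against the current docs.

```typescript
// Cache cluster sizes in GB offered by API Gateway (assumption: verify
// against the current API Gateway documentation).
const CACHE_CLUSTER_SIZES = ["0.5", "1.6", "6.1", "13.5", "28.4", "58.2", "118", "237"];

// API Gateway caps the cache TTL at 3600 seconds.
const MAX_CACHE_TTL_SECONDS = 3600;

// Hypothetical helper: returns a list of problems, empty if the settings look fine.
function validateCacheSettings(clusterSize: string, ttlSeconds: number): string[] {
  const problems: string[] = [];
  if (!CACHE_CLUSTER_SIZES.includes(clusterSize)) {
    problems.push(
      `cacheClusterSize "${clusterSize}" is not one of: ${CACHE_CLUSTER_SIZES.join(", ")}`
    );
  }
  if (!Number.isInteger(ttlSeconds) || ttlSeconds < 0 || ttlSeconds > MAX_CACHE_TTL_SECONDS) {
    problems.push(
      `cacheTtl must be a whole number of seconds between 0 and ${MAX_CACHE_TTL_SECONDS}`
    );
  }
  return problems;
}

console.log(validateCacheSettings("0.5", 10)); // no problems for the settings used above
console.log(validateCacheSettings("2", 7200)); // reports both the size and the TTL
```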

Adding endpoint to REST API backed by Lambda Integration:

Next, we create an API endpoint that is backed by a Lambda integration.

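    // Imports assume AWS CDK v2 (the aws-cdk-lib package)
    import * as path from "path";
    import { Runtime } from "aws-cdk-lib/aws-lambda";
    import { NodejsFunction, NodejsFunctionProps } from "aws-cdk-lib/aws-lambda-nodejs";

    // Resource for the knowledge base endpoint: /kb
    const kbResource = api.root.addResource("kb");

    const nodeJsFunctionProps: NodejsFunctionProps = {
      bundling: {
        externalModules: [
          "@aws-sdk/*", // The Node.js 18 runtime ships AWS SDK v3, so keep it out of the bundle
        ],
      },
      runtime: Runtime.NODEJS_18_X,
      timeout: cdk.Duration.seconds(30), // close to API Gateway's default 29-second integration timeout
    };

    const getCacheResultFn = new NodejsFunction(this, "getCacheResultFn", {
      entry: path.join(__dirname, "../lambdas", "get-cache-result.ts"),
      ...nodeJsFunctionProps,
      functionName: "getCacheResultFn",
    });

    // GET /kb invokes the Lambda function
    kbResource.addMethod("GET", new apigw.LambdaIntegration(getCacheResultFn));

Below is the Lambda function, which simulates querying the database and returning the results.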

    export async function handler(event: any, context: any) {
      const now = Date.now();
      console.log("Lambda is called", now);

      // search your database and return the records based on the query
      return {
        statusCode: 200,
        body: JSON.stringify({
          results: [
            {
              doc: "www.yourdomain.com/some-page",
              date: now,
            },
          ],
        }),
      };
    }

Please note that I'm returning the current timestamp in the response so that we can check whether a response was served from the cache or from Lambda.

Deployment & Testing

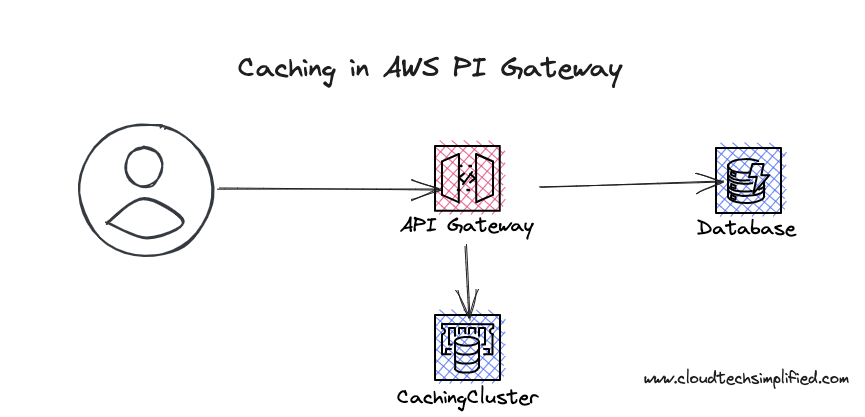

You can deploy the code by running cdk deploy. Please note that it may take a few minutes to create the cache cluster. If you open API Gateway in the AWS console, you'll see that the cache status is CREATE_IN_PROGRESS.

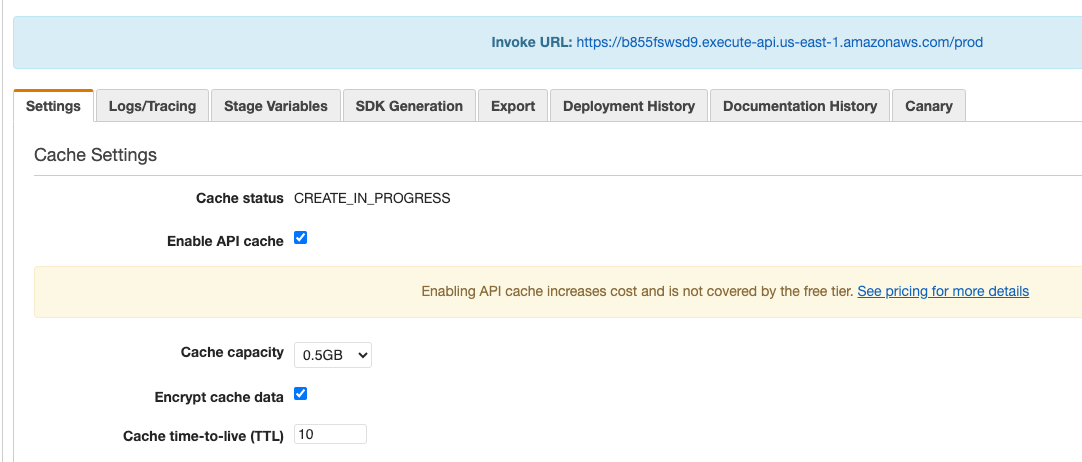

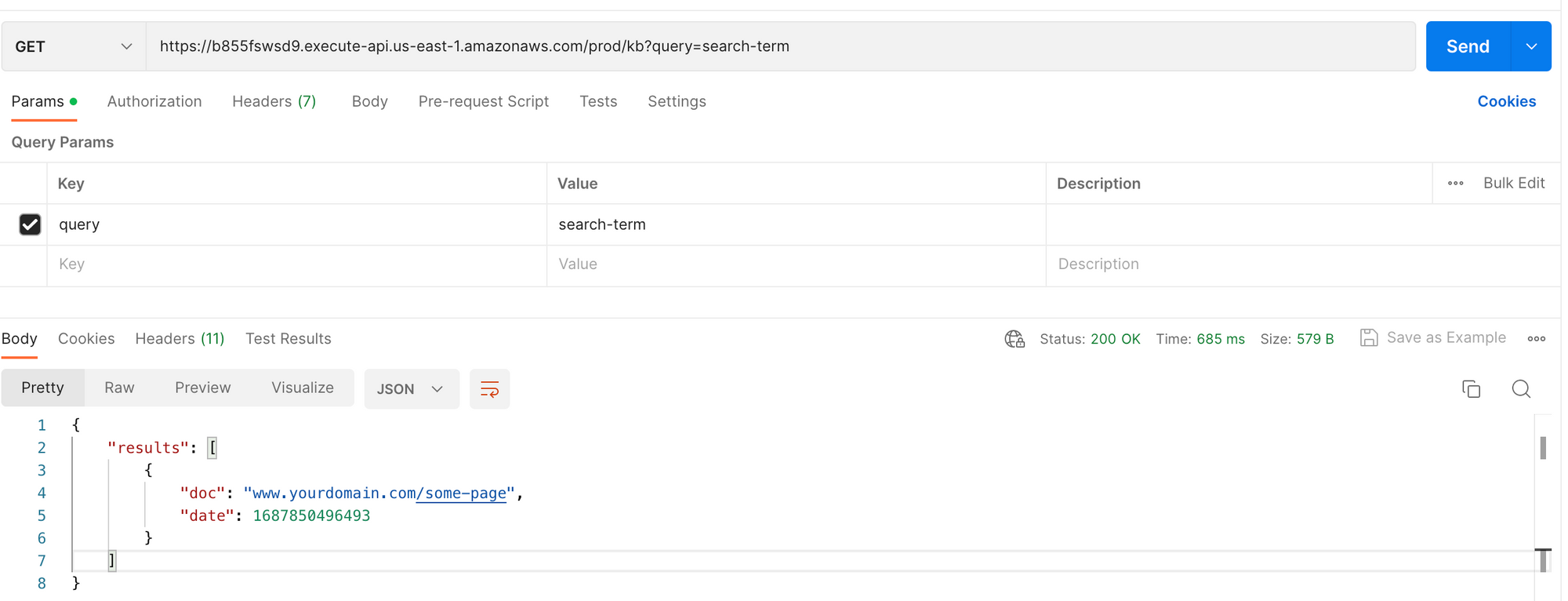

After a few minutes, the status changes to AVAILABLE. The first time you access the endpoint, nothing is cached yet, so your Lambda is invoked and the response comes from Lambda. If you access the same endpoint again within the TTL (10 seconds here), the response is served from the cache. You can confirm this because it carries the same timestamp as the first response.
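
If you'd rather check this in code than by eye, here's a rough sketch that compares the timestamps of two response bodies. The function name `servedFromCache` and the sample bodies are made up for illustration. In a real check, you'd call your own endpoint twice within the TTL and pass in the two response bodies.

```typescript
// Shape of the JSON body returned by the Lambda handler in this article.
type KbResponse = { results: { doc: string; date: number }[] };

// Hypothetical helper: true when both bodies carry the same timestamp, which
// means the second response very likely came from the cache and not from a
// new Lambda invocation.
function servedFromCache(firstBody: string, secondBody: string): boolean {
  const first = JSON.parse(firstBody) as KbResponse;
  const second = JSON.parse(secondBody) as KbResponse;
  const firstDate = first.results[0]?.date;
  const secondDate = second.results[0]?.date;
  return firstDate !== undefined && firstDate === secondDate;
}

// Made-up sample bodies, standing in for two real responses from GET /kb.
const firstBody =
  '{"results":[{"doc":"www.yourdomain.com/some-page","date":1700000000000}]}';
const cachedBody = firstBody; // same timestamp: served from the cache
const freshBody =
  '{"results":[{"doc":"www.yourdomain.com/some-page","date":1700000012345}]}';

console.log(servedFromCache(firstBody, cachedBody)); // true
console.log(servedFromCache(firstBody, freshBody)); // false
```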

If you're just testing, please bring the stack down afterwards (for example, by running cdk destroy) so that you avoid ongoing costs.

Please let me know your thoughts in the comments.